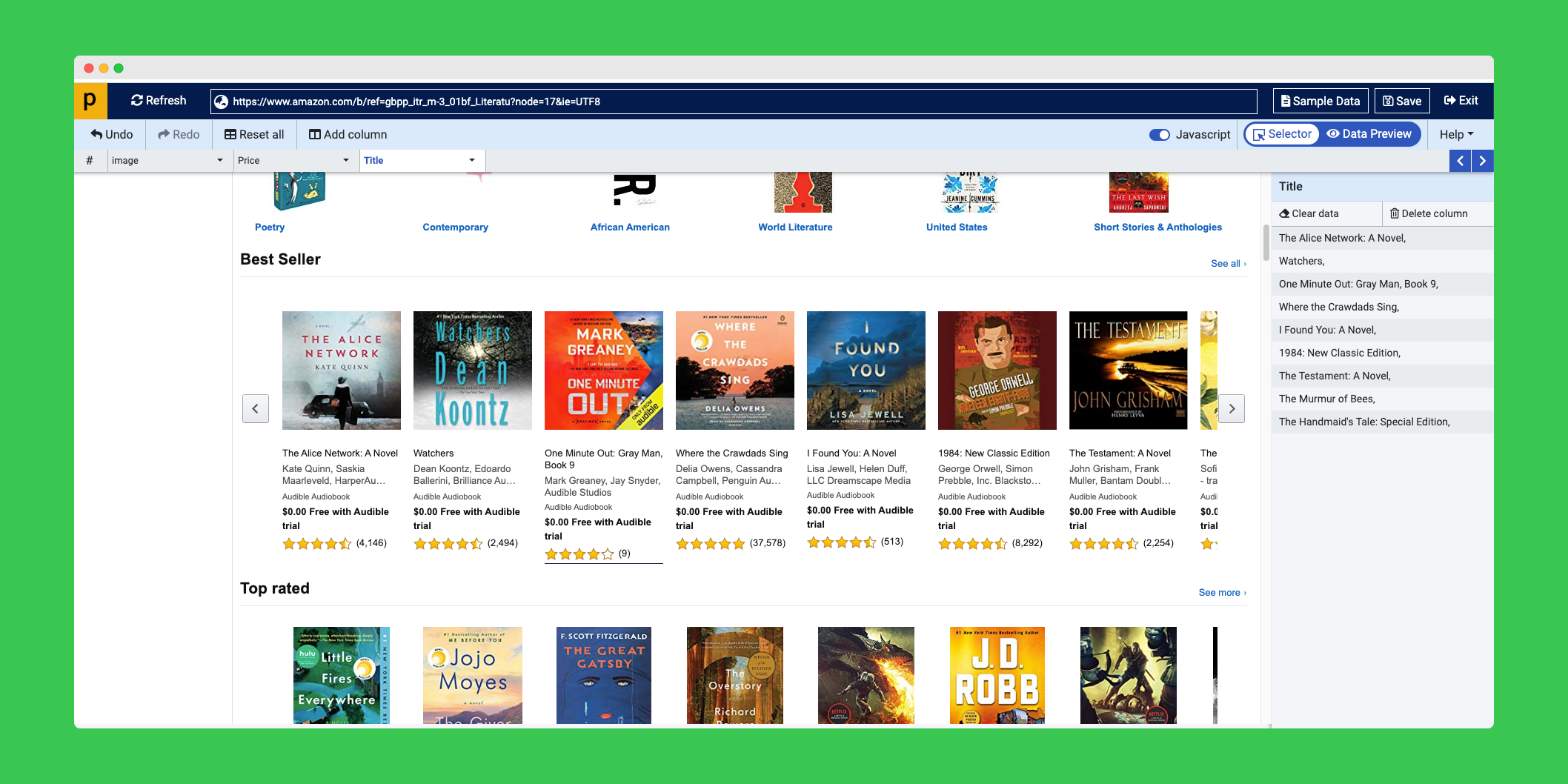

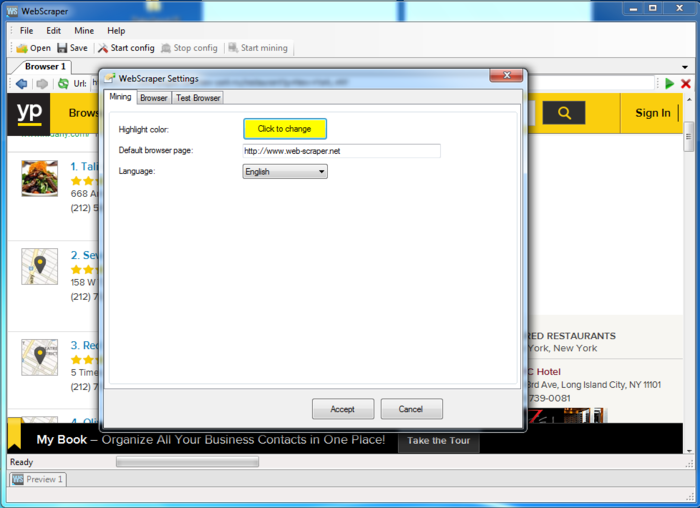

How to download a website and all of its content?

There are some instances when it is important to download an entire website: not just the finished result, but the HTML web pages and resources such as CSS, scripts and images. This may be because you want a backup of the code but can no longer get to the original source for some reason. Or perhaps you want a detailed record of how a website has changed over time.

Fortunately, GrabzIt's Web Scraper can achieve this by crawling over all of the web pages on a website. On each web page, the scraper downloads the HTML along with any resources referenced on the page.

Create a Scrape to Download an Entire Website

To make downloading your website as easy as possible, GrabzIt provides a scrape template. Enter your Target URL; this URL is automatically checked for errors and any required changes are made. Keep the Automatically Start Scrape checkbox ticked, and your scrape will start automatically.

If you want to alter the template, uncheck the Automatically Start Scrape checkbox. One alteration would be to run the scrape on a regular schedule, for instance, to create regular copies of a website. On the Schedule Scrape tab, simply click the Repeat Scrape checkbox and then select how frequently you want the scrape to repeat.

Once the scrape has finished you will get a ZIP file. Extract the ZIP file; inside, a directory called Files contains all of the downloaded web pages and website resources. There will also be a special HTML page called data.html in the root of the directory. Open this file in a web browser and you will find an HTML table with three columns, including:

Resource URL - this is the URL the web scraper found the resource at.

Downloading hundreds of PDF files manually was…tiresome. One fine day, a question popped up in my mind: why am I downloading all these files manually? That's when I started searching for an automatic tool. This sounded like a fun automation task and since I was eager to get my hands dirty with web scraping, I decided to give it a try. The idea was to input a link, scrape its source code for all possible PDF files and then download them.

Using a simple try-except block, I check whether the URL entered is valid. If it can be opened using urlopen, it is valid. Otherwise, the link is invalid and the program is terminated.

In Python, the HTML of a web page can be read like this: html = urlopen(my_url).read() However, when I tried to print it on my console, it wasn't a pleasant sight. In order to get properly formatted, human-readable HTML source code, I used BeautifulSoup, which is a Python package for parsing HTML and XML documents: html_page = bs(html, features="lxml")

Now, I had two main websites from which I occasionally downloaded PDF files. Upon evaluating the HTML code of both, I realized that the content of their meta tags was slightly different; for example, one of the websites had no og:title. In order to get usable metadata, I added this: og_url = html_page.find("meta", property="og:url")

Next, it was time to parse and evaluate the input URL. The results looked like this: ParseResult(scheme='https', netloc='', path='/courses/os-2019/', params='', query='', fragment='') Now, I knew the scheme, netloc (main website address), and the path of the web page.

Now that I had the HTML source code, I needed to find the exact links to all the PDF files present on that web page. If you know HTML, you would know that the <a> tag is used for links. First I obtained the links using the href property. Next, I checked if the link ended with a .pdf extension. If the link led to a PDF file, I further checked whether the og_url was present or not.

If og_url was present, it meant that the link was from a cnds web page, and not Grader. Here the current links looked like p1.pdf, p2.pdf etc. So to get a full-fledged link for each PDF file, I extracted the main URL from the content attribute of the og:url meta tag and appended my current link to it. For example, with the current link p5.pdf, appending it to the og_url gave the exact link for that PDF file.

While trying to download PDFs from the other website, I realised that the source codes were different. Hence, the links had to be dealt with differently. Since I had already parsed the URL, I knew its scheme and netloc. Upon appending the current link to it, I could easily get the exact link for my PDF file: links.append(base.scheme + "://" + loc + current_link)

And there it was! My very own notes-downloading web scraping tool. Why waste hours downloading files manually when you can copy-paste a link and let Python do its magic?

My overall code was built from the pieces above: a check_validity(my_url) function, the og_url = html_page.find("meta", property="og:url") lookup, and a print("\n \n Unable to Download A File \n") message for files that could not be downloaded.

Want to try it? Feel free to fork, clone, and star it on my Github.
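The steps described in this post (check that the URL opens, find the `<a>` links that end in .pdf, and turn relative links such as p5.pdf into full URLs) can be sketched roughly as follows. This is a minimal illustration, not the original script: it swaps BeautifulSoup for the standard library's `HTMLParser` so it runs without extra packages, it uses `urljoin` instead of manual string concatenation, and `check_validity` returns a boolean rather than terminating the program. The `PdfLinkCollector` class, the `find_pdf_links` helper, the sample HTML and the example.com URL are all assumptions made for the example.

```python
from html.parser import HTMLParser
from urllib.error import URLError
from urllib.parse import urljoin, urlparse
from urllib.request import urlopen


def check_validity(my_url):
    """Return True if the URL can be opened, False otherwise."""
    try:
        urlopen(my_url)
    except (URLError, ValueError):
        # URLError covers network and HTTP errors,
        # ValueError covers malformed URLs such as "abc".
        return False
    return True


class PdfLinkCollector(HTMLParser):
    """Hypothetical helper: collects href values of <a> tags ending in .pdf."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        # Check the path part only, so a query string cannot hide the extension.
        if href and urlparse(href).path.lower().endswith(".pdf"):
            self.hrefs.append(href)


def find_pdf_links(html, page_url):
    """Return absolute URLs for every PDF linked from the given HTML."""
    collector = PdfLinkCollector()
    collector.feed(html)
    # urljoin resolves relative links such as "p1.pdf" against the page URL.
    return [urljoin(page_url, href) for href in collector.hrefs]


# Made-up page and URL, for illustration only.
sample_html = """
<html><body>
  <a href="p1.pdf">Lecture 1</a>
  <a href="/files/p2.PDF">Lecture 2</a>
  <a href="notes.html">Notes</a>
</body></html>
"""
pdf_links = find_pdf_links(sample_html, "https://example.com/courses/os-2019/")
print(pdf_links)
```

Using `urljoin` handles both kinds of link the post ran into: a bare file name is resolved against the page's own path, while a link starting with `/` is resolved against the scheme and netloc only.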
